Hallucinations are a cost center. Inaccurate or fabricated output from an AI model that lacks structured grounding carries real costs: wasted labor, eroded trust, and potential compliance violations.

The Cost of Hallucinations in Unstructured AI Outputs

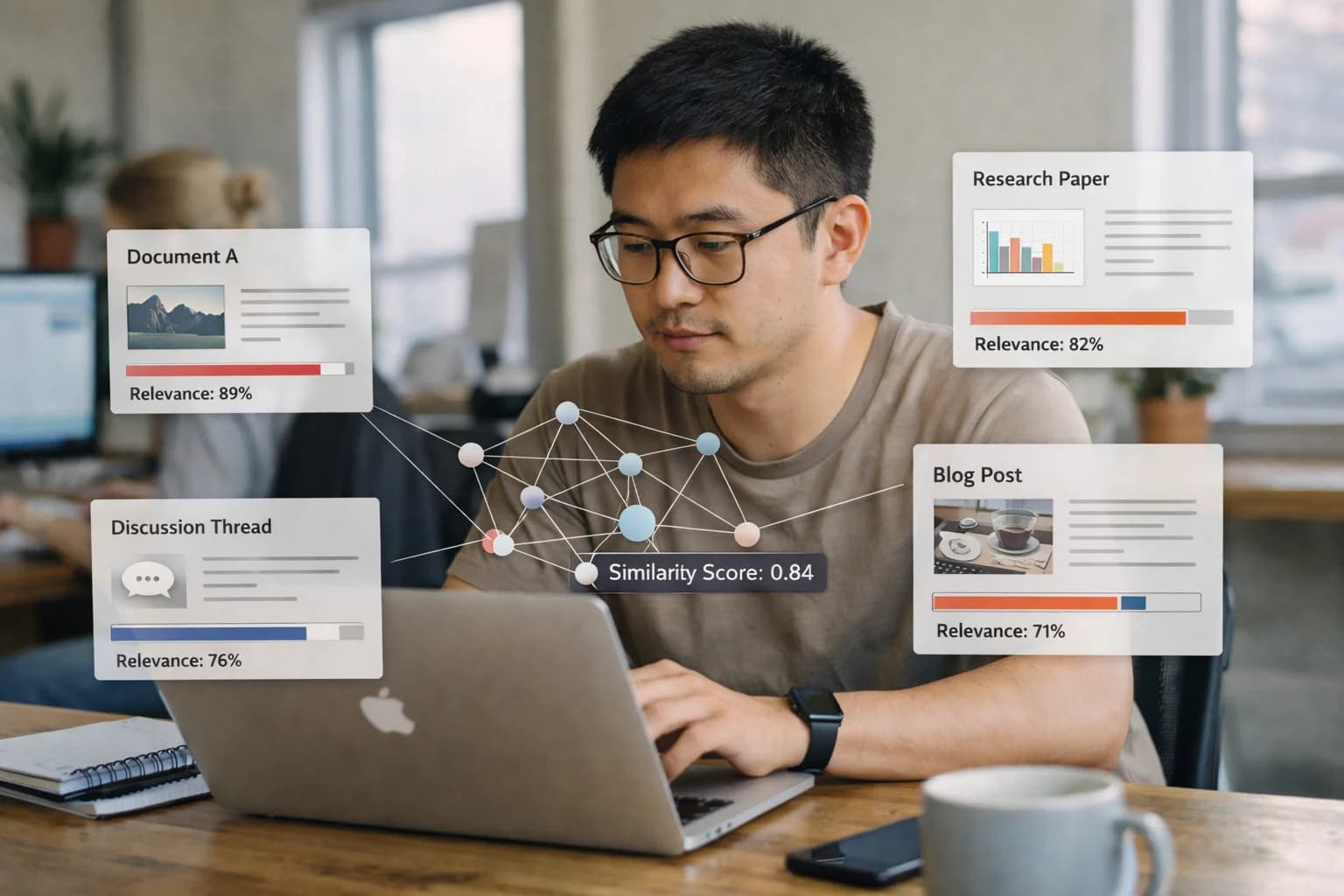

When AI generates content without a grounding semantic layer, the resulting inaccuracies and fabrications incur direct costs in credibility, compliance, and rework. This analysis breaks down the tangible financial and operational impact of unstructured AI outputs and the strategic imperative of context engineering.