AI agents bypass websites entirely, ingesting structured data from APIs and knowledge graphs to make decisions. Your HTML is noise. The primary interface for agentic commerce is a machine-readable fact base, not a homepage.

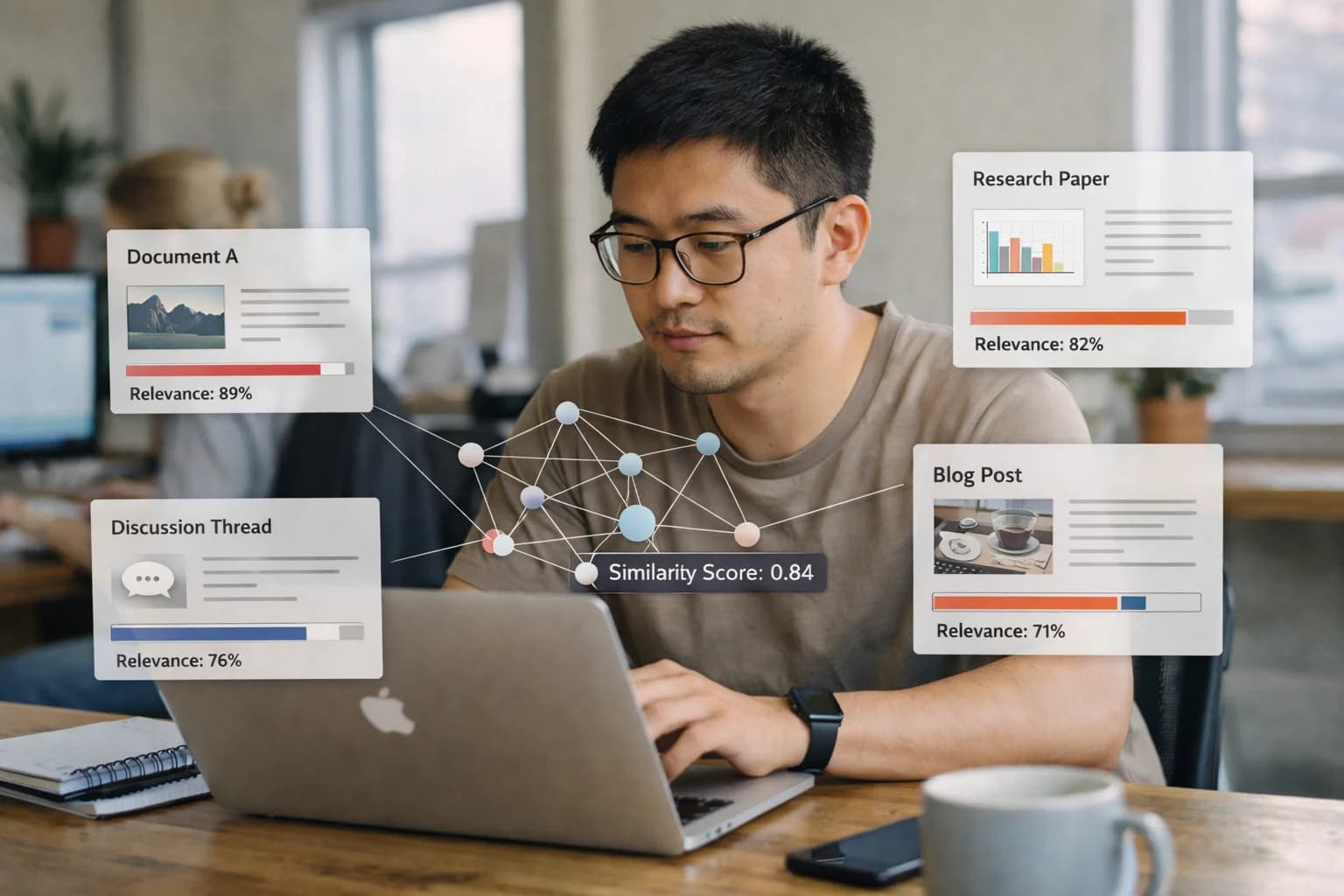

Why Semantic Enrichment is the Key to AI Agent Discovery

In the age of agentic commerce, your product's discoverability depends on how well AI agents can understand its context. Semantic enrichment is the process of connecting your data to broader ontologies, for example by mapping a product record onto a shared vocabulary such as schema.org, so that isolated facts become machine-interpretable knowledge. This is the foundation for zero-click visibility in AI-driven answer engines.
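To make the idea concrete, here is a minimal sketch of one common form of enrichment: mapping a flat product record onto schema.org terms as JSON-LD. The record, its field names, the `enrich_product` helper, and the `example.com` identifier are all hypothetical and chosen for illustration; the schema.org types and properties (`Product`, `Brand`, `Offer`, `sku`, `price`, `priceCurrency`, `sameAs`) are real vocabulary terms.

```python
import json


def enrich_product(record: dict) -> dict:
    """Map a flat, site-specific product record onto schema.org terms (JSON-LD)."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": record["name"],
        "sku": record["sku"],
        # A bare brand string becomes a typed entity an agent can reason about.
        "brand": {"@type": "Brand", "name": record["brand"]},
        "offers": {
            "@type": "Offer",
            "price": f'{record["price"]:.2f}',
            "priceCurrency": record["currency"],
        },
    }
    # sameAs links the product to external identifiers, tying it into a wider graph.
    if record.get("same_as"):
        doc["sameAs"] = list(record["same_as"])
    return doc


# Hypothetical record, as it might sit in an internal catalog.
record = {
    "name": "Trail Runner 2",
    "sku": "TR2-BLK-42",
    "brand": "Acme Outdoor",
    "price": 119.5,
    "currency": "EUR",
    "same_as": ["https://example.com/id/trail-runner-2"],
}

enriched = enrich_product(record)
print(json.dumps(enriched, indent=2))
```

The design choice worth noting is that nothing new is asserted about the product. The same facts are restated in a shared vocabulary, which is what lets an agent compare this offer with others without parsing page layout.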